Tech Trends Newsletter - 2026-02-18

Topic: AI Coding / Vibe Coding, LLM Agent / MCP, AI Developer Tools, AI Platform Updates

Highlights

- Anthropic releases Claude Opus 4.6 and Sonnet 4.6: Opus 4.6 sets a state-of-the-art 65.4% on Terminal-Bench 2.0, and Sonnet 4.6 brings a 1M-token context window with markedly better coding quality → AI Platform Updates

- OpenAI Codex desktop app and GPT-5.3-Codex: a parallel multi-agent execution environment for macOS with a Skills system → AI Platform Updates

- Google Antigravity IDE: an "agent-first" development platform powered by Gemini 3, released in public preview → AI Platform Updates

- MCP donated to the Agentic AI Foundation: co-founded by Anthropic, Block, and OpenAI, with Google contributing a gRPC transport → Developer Community

- Figma × Anthropic "Code to Canvas": converts UIs generated with Claude Code into editable Figma frames → AI Platform Updates

Claude Opus 4.6

- Source: Anthropic

- Date: 2026-02-05

Claude Opus 4.6

We’re upgrading our smartest model. Across agentic coding, computer use, tool use, search, and finance, Opus 4.6 is an industry-leading model, often by a wide margin.

www.anthropic.com

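Anthropic released Claude Opus 4.6, its first major model of 2026. It offers a 1M-token context window (beta), up to 128K output tokens, and Adaptive Thinking (a dynamic reasoning mode). It reaches state of the art on Terminal-Bench 2.0 at 65.4% (previous generation: 59.8%) and on OSWorld at 72.7% (previous generation: 66.3%). The "Agent Teams" feature in Claude Code lets multiple AI agents work in parallel.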

“Opus 4.6 hits state-of-the-art on Terminal-Bench 2.0 (65.4% for agentic coding in the terminal), Humanity’s Last Exam (complex multidisciplinary reasoning), and BrowseComp (agentic web search).”

Claude Sonnet 4.6

- Source: Anthropic

- Date: 2026-02-17

Introducing Sonnet 4.6

Claude Sonnet 4.6 is a full upgrade of the model’s skills across coding, computer use, long-reasoning, agent planning, knowledge work, and design.

www.anthropic.com

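Sonnet 4.6 is upgraded across coding, computer use, long-context reasoning, agent planning, and design. In Claude Code testing, 70% of users preferred Sonnet 4.6 over Sonnet 4.5, and it was preferred over Opus 4.5 in 59% of comparisons. It ships with a 1M-token context window (beta), and pricing is unchanged at $3/$15 per million tokens.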

“Users even favored it over Opus 4.5—the frontier model from November 2025—in 59% of comparisons, noting it was ‘significantly less prone to overengineering.’”
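
As a rough illustration of what the unchanged pricing means in practice, the sketch below estimates the cost of a single request. It assumes the $3/$15 figures are the input and output prices per million tokens respectively, and the token counts are hypothetical. It ignores any caching discounts or long-context pricing tiers.

```python
# Assumption: $3 per million input tokens, $15 per million output tokens.
INPUT_USD_PER_MTOK = 3.0
OUTPUT_USD_PER_MTOK = 15.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in US dollars."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


# Hypothetical request: 200K tokens of context in, 50K tokens out.
cost = estimate_cost_usd(200_000, 50_000)
print(f"estimated cost: ${cost:.2f}")
```

Because output tokens cost five times as much as input tokens under this reading, long generations can dominate the bill even when the context is large.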

Figma × Anthropic "Code to Canvas"

- Source: Anthropic / Figma

- Date: 2026-02-17

Figma partners with Anthropic to launch ‘Code to Canvas’

The feature creates a direct bridge between AI coding tools such as Claude Code and Figma’s collaborative design platform.

startupnews.fyi

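Figma and Anthropic have partnered to launch the "Code to Canvas" feature. It captures a UI built with Claude Code from the live browser state and converts it into an editable Figma frame. It runs on Figma's MCP server and bridges designer and engineer workflows.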

“The integration grabs the live browser state and converts it into a Figma-compatible frame, and the captured screen lands on your canvas as an editable design artifact.”

MCP Apps launch

- Source: Anthropic

- Date: 2026-01-26

Anthropic launches interactive Claude apps, including Slack and other workplace tools | TechCrunch

Claude users will now be able to call up interactive apps inside the chatbot interface, with Cowork integration coming soon.

techcrunch.com

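Anthropic launched the MCP Apps open specification. MCP servers can now serve interactive UIs, so more than 10 workplace tools, including Slack, Figma, Asana, and Canva, can be operated directly inside Claude. It is available on the Pro, Max, Team, and Enterprise plans.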

“MCP Apps is a formal extension to the MCP protocol that lets any MCP server deliver an interactive interface, not just data and actions.”

OpenAI Codex macOS app & GPT-5.3-Codex

- Source: OpenAI

- Date: 2026-02

openai.com

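OpenAI announced the Codex desktop app (macOS) and GPT-5.3-Codex. The app provides parallel multi-agent execution, a Skills system (extending Codex beyond code generation to information gathering, problem solving, and writing), and worktree support (parallel work in the same repository). GPT-5.3-Codex is 25% faster than the previous generation and is the first model that was also used in its own training, deployment, and test evaluation.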

“With GPT-5.3-Codex, Codex goes from an agent that can write and review code to an agent that can do nearly anything developers and professionals can do on a computer.”

Google Antigravity IDE & Gemini 3

- Source: Google

- Date: 2026-02

Build with Google Antigravity, our new agentic development platform

Google Antigravity: The agentic development platform that lets agents autonomously plan, execute, and verify complex tasks. Available now.

developers.googleblog.com

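Google released "Antigravity", an "agent-first" development platform, as a free public preview. It is powered by Gemini 3 Pro and runs on macOS, Windows, and Linux. The user acts as the architect while agents carry out tasks autonomously across the editor, terminal, and browser. Claude Sonnet 4.5 and GPT-OSS are also available as model options.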

“You act as the architect, collaborating with intelligent agents that operate autonomously across the editor, terminal, and browser.”

Google Cloud contributes a gRPC transport to MCP

- Source: InfoQ

- Date: 2026-02-05

https://www.infoq.com/news/2026/02/google-grpc-mcp-transport/

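Google Cloud contributed a gRPC transport package to MCP. It reduces the bandwidth consumption and CPU overhead of JSON-RPC and makes AI agent integration easier for companies that standardize on gRPC. Spotify has already invested in experimental internal support for MCP over gRPC.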

“Because gRPC is our standard protocol in the backend, we have invested in experimental support for MCP over gRPC internally.” — Stefan Särne, Spotify

MCP donated to the Agentic AI Foundation under the Linux Foundation

- Source: Anthropic

- Date: 2025-12-09

Donating the Model Context Protocol and establishing the Agentic AI Foundation

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

www.anthropic.com

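Anthropic donated MCP to the Agentic AI Foundation (AAIF), a new organization under the Linux Foundation. It was co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, AWS, Cloudflare, and Bloomberg. MCP's first-year results: more than 10,000 active public MCP servers and more than 97 million monthly SDK downloads.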

“Ensure agentic AI evolves transparently, collaboratively, and in the public interest through strategic investment, community building, and shared development of open standards.”

Addy Osmani: "My LLM coding workflow going into 2026"

- Source: AddyOsmani.com

- Date: 2026-01-04

My LLM coding workflow going into 2026

AI coding assistants became game-changers this year, but harnessing them effectively takes skill and structure. Here's my workflow for planning, coding, and ...

addyosmani.com

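Addy Osmani of the Google Chrome team published his workflow for AI-assisted coding. Framing it as "AI-augmented software engineering", he recommends writing a spec.md up front, iterating in small chunks, committing frequently, and keeping a human in the loop. He also mentions that about 90% of Claude Code's own codebase is AI-generated.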

“The developer + AI duo is far more powerful than either alone.”

Microsoft MCP security and governance

- Source: Microsoft Inside Track Blog

- Date: 2026-02-12

Protecting AI conversations at Microsoft with Model Context Protocol security and governance - Inside Track Blog

Discover how we’re streamlining MCP governance through secure-by-default architecture, automation, and inventory at Microsoft.

www.microsoft.com

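Microsoft is implementing a security and governance framework for MCP deployments. It rests on three pillars (secure-by-default architecture, automation, and inventory management) and aims for "a faster, safer environment for agent development".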

Reddit AI coding tool ratings (updated February 2026)

- Source: Reddit / aitooldiscovery.com

- Date: 2026-02-17 (updated)

Best AI for Coding: Reddit's Top Picks for 2026 | Developers' Choice

Best AI coding assistants per Reddit developers in 2026. Claude Opus 4.6 vs GitHub Copilot vs Cursor vs Codeium compared by r/programming and r/learnprogramming communities.

www.aitooldiscovery.com

The Reddit community's 2026 ranking of AI coding tools: 1st Claude Opus 4.6 (★4.9), 2nd Cursor (★4.8), 3rd GitHub Copilot (★4.7). Developers report productivity gains of 20-50%.

“Opus 4.6 solved in one pass what 4.5 needed 3 attempts for.”

2026 AI coding agent comparison (Faros.ai)

- Source: Faros.ai

- Date: 2026-01-02 (updated 2026-01-30)

Best AI Coding Agents for 2026: Real-World Developer Reviews | Faros AI

A developer-focused look at the best AI coding agents in 2026, comparing Claude Code, Cursor, Codex, Copilot, Cline, and more—with guidance for evaluating them at enterprise scale.

www.faros.ai

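Front-runners: Cursor, Claude Code, Codex, GitHub Copilot (Agent Mode), and Cline. The five evaluation criteria are token efficiency and cost, productivity impact, code quality and hallucination control, repository understanding, and privacy and data control.

“It’s incredibly exhausting trying to get these models to operate correctly.” (a developer's comment)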

AI Coding Tools Comparison 2026 (Verdent.ai)

- Source: Verdent.ai

- Date: 2026-02-04

AI Coding Tools Comparison 2026: Agents, IDEs & Multi-Agent Platforms

2026 AI coding tools compared: agents (Tonkotsu, Devin), IDEs (Cursor, Windsurf), assistants (Copilot). Find the right tool for your workflow.

www.verdent.ai

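AI coding tools are evolving from assistants to agents to multi-agent platforms. Claude Code's strength is high-quality code generation through "Extended Thinking"; Cursor's is multi-file editing in Composer mode. The article also warns about hidden costs (credit systems and rate limits).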

“Credit systems and rate limits are the hidden costs that marketing materials skip—track usage religiously.”

Data analysis book list for the vibe coding era (Hatena Blog)

- Source: tjo.hatenablog.com

- Date: 2026-02-05

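2026 edition: a reading list for anyone who does data analysis for a living, recommended precisely because this is the era of vibe coding with generative AI - 渋谷駅前で働くデータサイエンティストのブログ

It is once again the season for my recommended book list, so let's get straight to it. As for what has changed since last year: first, I removed some of the standard texts that have become badly outdated. The reason is simple. There are cases here and there where "generative AI will tell you as much of that as you like", and cases where other recent books already cover, as basic material, everything those texts discuss. It is also a fact that with vibe coding spreading, "there is effectively no longer any need to learn coding specialized for data analysis", and even more than last year, books of the "explains theory and algorithms properly" kind…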

tjo.hatenablog.com

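Given that the spread of vibe coding is making specialized coding skills less necessary, the author updated the book list to emphasize the fundamentals of theory and algorithms. The post argues for a shift in learning strategy: leave implementation to AI and focus on understanding concepts.

“With the spread of vibe coding, specialized data analysis coding skills have become almost unnecessary.”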

Local vibe coding with Claude Code and Ollama (Hatena Blog)

- Source: touch-sp.hatenablog.com

- Date: 2026-01-19 (updated 2026-02-04)

Local vibe coding with Claude Code and Ollama - パソコン関連もろもろ

Introduction: I previously wrote an article on combining Continue CLI with Ollama (touch-sp.hatenablog.com). This time I combine Claude Code with Ollama, tested on Windows 11. Steps: install Claude Code, using PowerShell: irm https://claude.ai/install.ps1 | iex. Getting started, launching from Ollama: ollama launch claude. A model selection screen appears. Launching from Claude: set the environment variables. The following settings are temporary. $env:ANTHROP…

touch-sp.hatenablog.com

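A hands-on report on local vibe coding with Claude Code and Ollama on Windows 11. It shares practical findings, such as Agent Skills not being used unless explicitly requested and the need to run the ollama stop command to free VRAM.

“Unless I explicitly said 'please use skills', it rarely used them.”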

Building a statute-search MCP server with vibe coding (Zenn / GovTech Tokyo)

- Source: Zenn

- Date: 2025-06-04

I built a statute-search MCP server with vibe coding

zenn.dev

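A GovTech Tokyo staff member used Claude Code to build an MCP server that integrates with the e-Gov statute search API. The project demonstrates that someone with little coding experience can build a practical tool with vibe coding. It also includes practical know-how, such as the fact that writing logs to stdout breaks MCP's JSON communication.

“Because the MCP protocol communicates in JSON, writing logs to standard output causes JSON parsing errors.”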
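
The stdout pitfall can be sketched with a small Python example. This is a minimal illustration, not the article's code and not the MCP SDK: it assumes a simplified newline-delimited JSON-RPC-style exchange, and the names `handle_message`, `serve`, and `demo-server` are hypothetical. The point it shows is that protocol messages go to the output stream while all logging is routed to stderr.

```python
import io
import json
import logging
import sys

# Route every log record to stderr so stdout carries only protocol JSON.
logging.basicConfig(stream=sys.stderr, level=logging.INFO)
log = logging.getLogger("demo-server")  # hypothetical logger name


def handle_message(raw: str) -> str:
    """Parse one JSON request line and return one JSON response line."""
    request = json.loads(raw)
    log.info("received method=%s", request.get("method"))  # written to stderr
    response = {
        "jsonrpc": "2.0",
        "id": request.get("id"),
        "result": {"echo": request.get("method")},  # hypothetical payload
    }
    return json.dumps(response)


def serve(in_stream, out_stream) -> None:
    """Read newline-delimited JSON from in_stream and write replies to out_stream."""
    for line in in_stream:
        if line.strip():
            out_stream.write(handle_message(line) + "\n")
            out_stream.flush()


# Demo with in-memory streams; a real stdio server would call
# serve(sys.stdin, sys.stdout) instead.
demo_out = io.StringIO()
serve(io.StringIO('{"jsonrpc": "2.0", "id": 1, "method": "ping"}\n'), demo_out)
```

If the `log.info` call were replaced with a bare `print`, the log text would be interleaved with the responses on stdout, and a client parsing each line as JSON would fail on it.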

Opus 4.6 vs Codex 5.3 (Interconnects)

- Source: Interconnects

- Date: 2026-02-09

Opus 4.6, Codex 5.3, and the post-benchmark era

On comparing models in 2026.

www.interconnects.ai

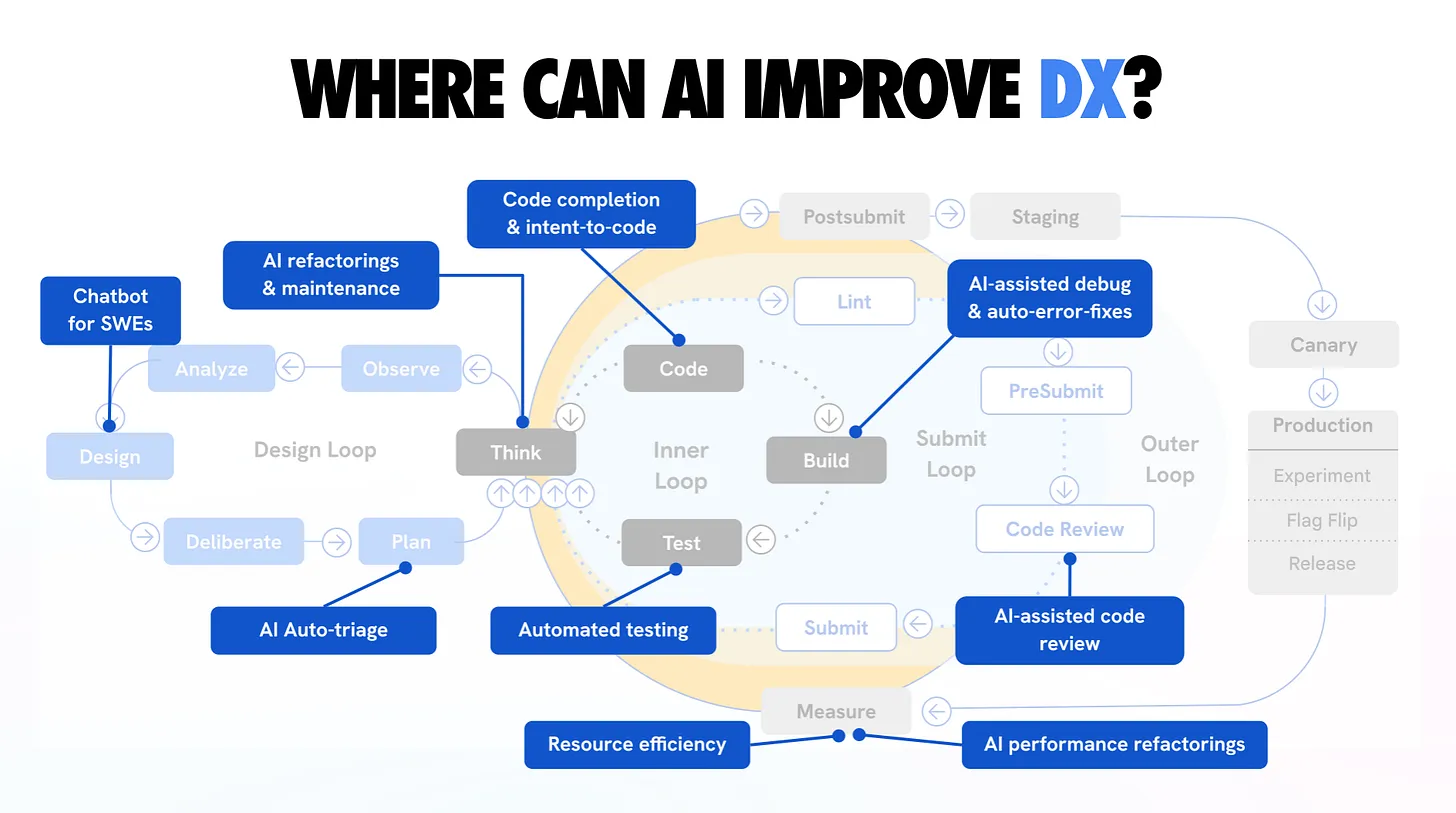

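Opus 4.6 leads on usability and breadth of task coverage, while Codex 5.3 leads on specialized bug finding and fixing. Traditional benchmarks no longer show model quality in a meaningful way, and hands-on experience is what now separates models. Going forward, competition will center on agent orchestration and tool access rather than raw model capability.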

“Benchmark-based release reactions barely matter.”

Research Papers

AIDev: Studying AI Coding Agents on GitHub

- Source: arXiv cs.SE

- Date: 2026-02-09

AIDev: Studying AI Coding Agents on GitHub

AI coding agents are rapidly transforming software engineering by performing tasks such as feature development, debugging, and testing. Despite their growing impact, the research community lacks a comprehensive dataset capturing how these agents are used in real-world projects. To address this gap, we introduce AIDev, a large-scale dataset focused on agent-authored pull requests (Agentic-PRs) in real-world GitHub repositories. AIDev aggregates 932,791 Agentic-PRs produced by five agents: OpenAI Codex, Devin, GitHub Copilot, Cursor, and Claude Code. These PRs span 116,211 repositories and involve 72,189 developers. In addition, AIDev includes a curated subset of 33,596 Agentic-PRs from 2,807 repositories with over 100 stars, providing further information such as comments, reviews, commits, and related issues. This dataset offers a foundation for future research on AI adoption, developer productivity, and human-AI collaboration in the new era of software engineering.

> AI Agent, Agentic AI, Coding Agent, Agentic Coding, Agentic Software Engineering, Agentic Engineering

arxiv.org

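A large-scale dataset of 932,791 PRs generated by five AI coding agents (OpenAI Codex, Devin, GitHub Copilot, Cursor, Claude Code), collected from 116,211 repositories. It provides a foundation for empirically analyzing how AI agents are adopted and how they collaborate with developers.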

“AIDev aggregates 932,791 Agentic-PRs produced by five agents spanning 116,211 repositories and involving 72,189 developers.”

On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents

- Source: arXiv cs.SE

- Date: 2026-01-28

On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents

AI coding agents such as Codex and Claude Code are increasingly used to autonomously contribute to software repositories. However, little is known about how repository-level configuration artifacts affect operational efficiency of the agents. In this paper, we study the impact of AGENTS.md files on the runtime and token consumption of AI coding agents operating on GitHub pull requests. We analyze 10 repositories and 124 pull requests, executing agents under two conditions: with and without an AGENTS.md file. We measure wall-clock execution time and token usage during agent execution. Our results show that the presence of AGENTS.md is associated with a lower median runtime (Δ28.64%) and reduced output token consumption (Δ16.58%), while maintaining a comparable task completion behavior. Based on these results, we discuss immediate implications for the configuration and deployment of AI coding agents in practice, and outline a broader research agenda on the role of repository-level instructions in shaping the behavior, efficiency, and integration of AI coding agents in software development workflows.

arxiv.org

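The paper finds that the presence of an AGENTS.md file is associated with a 28.64% lower median agent runtime and 16.58% lower output token consumption, while task completion behavior stays comparable. The finding can be applied in practice right away.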

“The presence of AGENTS.md is associated with a lower median runtime (Delta 28.64%) and reduced output token consumption (Delta 16.58%).”
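
To make the reported deltas concrete, the sketch below computes a relative reduction in the median between two conditions. It assumes the delta is defined as (baseline median - treated median) / baseline median, which the abstract does not spell out, and the runtimes are hypothetical values rather than data from the paper.

```python
from statistics import median


def median_reduction_pct(baseline, treated):
    """Relative reduction of the median, in percent, from baseline to treated."""
    base = median(baseline)
    return (base - median(treated)) / base * 100.0


# Hypothetical wall-clock runtimes in seconds (not data from the paper).
runtime_without_agents_md = [100.0, 200.0, 300.0]
runtime_with_agents_md = [80.0, 150.0, 210.0]

reduction = median_reduction_pct(runtime_without_agents_md, runtime_with_agents_md)
print(f"median runtime reduction: {reduction:.2f}%")  # prints 25.00%
```

The same function applies unchanged to output token counts, which is how a figure like the 16.58% token reduction could be reproduced from raw measurements under this definition.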

SMCP: Secure Model Context Protocol

- Source: arXiv cs.CR

- Date: 2026-02-01

SMCP: Secure Model Context Protocol

Agentic AI systems built around large language models (LLMs) are moving away from closed, single-model frameworks and toward open ecosystems that connect a variety of agents, external tools, and resources. The Model Context Protocol (MCP) has emerged as a standard to unify tool access, allowing agents to discover, invoke, and coordinate with tools more flexibly. However, as MCP becomes more widely adopted, it also brings a new set of security and privacy challenges. These include risks such as unauthorized access, tool poisoning, prompt injection, privilege escalation, and supply chain attacks, any of which can impact different parts of the protocol workflow. While recent research has examined possible attack surfaces and suggested targeted countermeasures, there is still a lack of systematic, protocol-level security improvements for MCP. To address this, we introduce the Secure Model Context Protocol (SMCP), which builds on MCP by adding unified identity management, robust mutual authentication, ongoing security context propagation, fine-grained policy enforcement, and comprehensive audit logging. In this paper, we present the main components of SMCP, explain how it helps reduce security risks, and illustrate its application with practical examples. We hope that this work will contribute to the development of agentic systems that are not only powerful and adaptable, but also secure and dependable.

arxiv.org

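Proposes SMCP to address security gaps in MCP. It combines unified identity management, mutual authentication, security context propagation, fine-grained policy enforcement, and audit logging, and offers defenses against tool poisoning, prompt injection, and supply chain attacks.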

“SMCP incorporates unified identity management, robust mutual authentication, ongoing security context propagation, fine-grained policy enforcement, and comprehensive audit logging.”

Does SWE-Bench-Verified Test Agent Ability or Model Memory?

- Source: arXiv cs.SE

- Date: 2025-12-11

Does SWE-Bench-Verified Test Agent Ability or Model Memory?

SWE-Bench-Verified, a dataset comprising 500 issues, serves as a de facto benchmark for evaluating various large language models (LLMs) on their ability to resolve GitHub issues. But this benchmark may overlap with model training data. If that is true, scores may reflect training recall, not issue-solving skill. To study this, we test two Claude models that frequently appear in top-performing agents submitted to the benchmark. We ask them to find relevant files using only issue text, and then issue text plus file paths. We then run the same setup on BeetleBox and SWE-rebench. Despite both benchmarks involving popular open-source Python projects, models performed 3 times better on SWE-Bench-Verified. They were also 6 times better at finding edited files, without any additional context about the projects themselves. This gap suggests the models may have seen many SWE-Bench-Verified tasks during training. As a result, scores on this benchmark may not reflect an agent's ability to handle real software issues, yet it continues to be used in ways that can misrepresent progress and lead to choices that favour agents that use certain models over strong agent design. Our setup tests the localization step with minimal context to the extent that the task should be logically impossible to solve. Our results show the risk of relying on older popular benchmarks and support the shift toward newer datasets built with contamination in mind.

arxiv.org

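The paper points out that SWE-Bench-Verified may reflect memorized training data rather than agent ability. In experiments with Claude models, performance on SWE-Bench-Verified was 3 times higher than on BeetleBox and SWE-rebench, and identification of edited files was 6 times higher. The authors advocate moving to newer benchmarks.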

“Models performed 3 times better on SWE-Bench-Verified compared to BeetleBox and SWE-rebench, and were 6 times better at finding edited files.”

Vibe Coding Kills Open Source

- Source: arXiv cs.SE

- Date: 2026-01-21

Vibe Coding Kills Open Source

Generative AI is changing how software is produced and used. In vibe coding, an AI agent builds software by selecting and assembling open-source software (OSS), often without users directly reading documentation, reporting bugs, or otherwise engaging with maintainers. We study the equilibrium effects of vibe coding on the OSS ecosystem. We develop a model with endogenous entry and heterogeneous project quality in which OSS is a scalable input into producing more software. Users choose whether to use OSS directly or through vibe coding. Vibe coding raises productivity by lowering the cost of using and building on existing code, but it also weakens the user engagement through which many maintainers earn returns. When OSS is monetized only through direct user engagement, greater adoption of vibe coding lowers entry and sharing, reduces the availability and quality of OSS, and reduces welfare despite higher productivity. Sustaining OSS at its current scale under widespread vibe coding requires major changes in how maintainers are paid.

arxiv.org

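An economic model of vibe coding's effect on the OSS ecosystem. Productivity rises, but where OSS is monetized only through direct user engagement, maintainer entry and sharing decline and the availability and quality of OSS fall. The paper calls out this paradox of higher productivity with lower welfare.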

“When OSS is monetized only through direct user engagement, greater adoption of vibe coding lowers entry and sharing, reduces the availability and quality of OSS.”

EvoCodeBench: Self-Evolving LLM-Driven Coding Systems

- Source: arXiv cs.CL

- Date: 2026-02-10

EvoCodeBench: A Human-Performance Benchmark for Self-Evolving LLM-Driven Coding Systems

As large language models (LLMs) continue to advance in programming tasks, LLM-driven coding systems have evolved from one-shot code generation into complex systems capable of iterative improvement during inference. However, existing code benchmarks primarily emphasize static correctness and implicitly assume fixed model capability during inference. As a result, they do not capture inference-time self-evolution, such as whether accuracy and efficiency improve as an agent iteratively refines its solutions. They also provide limited accounting of resource costs and rarely calibrate model performance against that of human programmers. Moreover, many benchmarks are dominated by high-resource languages, leaving cross-language robustness and long-tail language stability underexplored. Therefore, we present EvoCodeBench, a benchmark for evaluating self-evolving LLM-driven coding systems across programming languages with direct comparison to human performance. EvoCodeBench tracks performance dynamics, measuring solution correctness alongside efficiency metrics such as solving time, memory consumption, and improvement algorithmic design over repeated problem-solving attempts. To ground evaluation in a human-centered reference frame, we directly compare model performance with that of human programmers on the same tasks, enabling relative performance assessment within the human ability distribution. Furthermore, EvoCodeBench supports multiple programming languages, enabling systematic cross-language and long-tail stability analyses under a unified protocol. Our results demonstrate that self-evolving systems exhibit measurable gains in efficiency over time, and that human-relative and multi-language analyses provide insights unavailable through accuracy alone. EvoCodeBench establishes a foundation for evaluating coding intelligence in evolving LLM-driven systems.

arxiv.org

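A benchmark for evaluating self-evolving LLM coding systems that iteratively improve their code. Instead of conventional one-shot correctness, it tracks how correctness, solving time, and memory efficiency evolve over multiple attempts, and it enables direct comparison with human programmers.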

“Unlike traditional benchmarks focusing on one-shot accuracy, EvoCodeBench tracks how performance evolves across multiple solving attempts.”

Key Takeaways

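- The "agent war" among the three major platforms is in full swing: Anthropic (Claude Code + Agent Teams), OpenAI (Codex Desktop + Skills), and Google (Antigravity IDE) have each shipped their own multi-agent development environment. The axis of competition is shifting from model performance to agent orchestration and tool ecosystems.

- MCP is becoming the HTTP of AI infrastructure: the donation to the AAIF under the Linux Foundation, Google's gRPC support, Microsoft's security governance, and 97 million monthly SDK downloads all point the same way. MCP has grown into a standard protocol that is "as common as running one more web server".

- The upside and downside of vibe coding have become clear: it lowers the barrier to entry for non-programmers, while academic work also documents an economic threat to the OSS ecosystem and "fast but flawed" code quality problems. Keeping human oversight under the banner of "AI-augmented software engineering", as Addy Osmani does, is becoming the mainstream stance.

- Benchmark contamination has surfaced: results on SWE-Bench-Verified may reflect memorization rather than ability, and new-generation evaluation methods such as EvoCodeBench and ProxyWar are emerging. Some also hold that "benchmark-based release reactions barely matter".

- AGENTS.md shows an immediate payoff: simply adding an AGENTS.md file to a repository was associated with roughly 30% shorter agent runtime (28.64% at the median). It is a low-cost efficiency gain that can be put into practice today.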

Generated by tech-trends-newsletter skill

KJR020's Blog
